Apps on Azure Blog

Announcing the release of workload profile and managed scaling of Timer Trigger for Azure Functions

Azure Functions for cloud-native microservices, hosted on Azure Container Apps, was released in public preview in May 2023 at Build. This hosting option lets you create and deploy your Azure Functions code in the same environment as your other microservices, APIs, and container-hosted programs. We hope you have had a chance to try running Functions containers and have enjoyed the offering so far. If not, check out Azure Container Apps hosting of Azure Functions | Microsoft Learn. Help us improve by taking this short survey.

For the public preview launch, we enabled Azure Functions client tooling for deploying and managing Azure Functions on Azure Container Apps resources, including:

- Azure CLI, Azure portal, Azure Functions Core Tools, ARM/Bicep templates, and Azure Developer CLI (azd)

- CI/CD tools such as GitHub Actions and Azure Pipelines tasks

- Built-in support for Azure Functions triggers and bindings

- Platform-managed scaling for HTTP, Azure Queue Storage, Azure Service Bus, Azure Event Hubs, and Kafka triggers

We also released the Dapr extension for Azure Functions, along with Functions client tooling support for managing Dapr configurations.

We are excited to announce support for these popular features in the current preview:

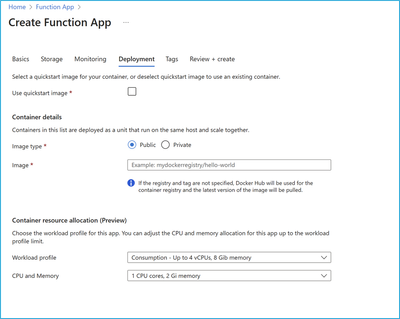

- Workload profiles environment: lets you host your function apps on Consumption and Dedicated plans, across workload profiles, within the same Container Apps environment.

- Dedicated plan: lets you host your function apps on dedicated compute resources, including GPUs, choosing from a range of compute sizes and types up to 96 vCPUs and 880 GiB of memory.

- Platform-managed scaling for timer trigger functions: lets you run a function on a schedule you set, while still scaling from zero to one instance and back to zero.

We are lining up several enhancements that you will hear more about soon, including the GA release. These features are currently in public preview and should become generally available soon!

Consumption and Dedicated Workload Profiles

Workload profiles determine the amount of compute and memory resources available to your apps in an Azure Container Apps environment. You can have multiple workload profiles of varying sizes within the environment, which lets you choose the optimal compute size for each of your hosted function app containers. Workload profiles are designed to help you optimize costs and performance for microservices by selecting either serverless Consumption compute or customized Dedicated compute.

The Consumption workload profile is available by default, and with it you pay only for the resources your apps use.

Dedicated workload profiles provide defined compute resources for your apps. These profiles are ideal for apps that need more compute or memory, and you can select from a range of compute sizes and types, from 0.5 vCPU/1 GiB up to 96 vCPUs and 880 GiB of memory. Apps running in dedicated workload profiles use the new Dedicated pricing plan, which is billed per compute instance for better cost predictability.
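Choosing "the optimal compute size" for each function app container amounts to picking the smallest profile that satisfies both its CPU and its memory request. The following minimal Python sketch shows that selection. The catalog is hypothetical: the smallest and largest entries echo the 0.5 vCPU/1 GiB and 96 vCPU/880 GiB range quoted in this post, while the profile names and the middle entry are placeholders, not real Azure profile types. Check the Workload profiles in Azure Container Apps documentation for actual profile types and limits.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Profile:
    name: str          # hypothetical profile name, not a real Azure SKU
    vcpus: float       # vCPUs available to an app on this profile
    memory_gib: float  # memory available, in GiB


# Hypothetical catalog for illustration only. The first and last entries
# mirror the range quoted in the post; the middle entry is made up.
CATALOG = [
    Profile("small-example", 0.5, 1.0),
    Profile("medium-example", 4.0, 16.0),
    Profile("large-example", 96.0, 880.0),
]


def smallest_fitting_profile(
    cpu: float, memory_gib: float, catalog: Sequence[Profile] = CATALOG
) -> Optional[Profile]:
    """Return the smallest profile (ordered by vCPUs, then memory) that meets
    both the CPU and the memory request, or None if nothing fits."""
    candidates = [p for p in catalog if p.vcpus >= cpu and p.memory_gib >= memory_gib]
    if not candidates:
        return None
    return min(candidates, key=lambda p: (p.vcpus, p.memory_gib))


if __name__ == "__main__":
    print(smallest_fitting_profile(0.25, 0.5).name)  # small-example
    print(smallest_fitting_profile(2, 32).name)      # large-example: memory drives the choice
    print(smallest_fitting_profile(128, 1))          # None: nothing is large enough
```

Both dimensions have to fit, which is why a modest CPU request with a large memory request can still land on the biggest profile.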

If the minimum replica count is set to zero, apps running on dedicated compute can scale in to zero and scale out as needed. You can also use dedicated workload profiles to deploy your function app containers on GPU compute resources, which suit scenarios such as image and video processing and rendering, audio transcription, model fine-tuning, and more!
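The role of the minimum replica setting can be illustrated with a simplified scaling rule, loosely modeled on the event-driven (KEDA-style) scaling that Azure Container Apps builds on. This is an assumption-laden sketch: the function and parameter names are hypothetical, and the real platform applies more logic, such as polling intervals and cool-down periods. The point is only that a minimum of zero lets the replica count drop to zero when there is no pending work.

```python
import math


def desired_replicas(pending_work: int, per_replica_target: int,
                     min_replicas: int, max_replicas: int) -> int:
    """Hypothetical, simplified scaling rule: one replica per
    `per_replica_target` units of pending work, clamped to the
    [min_replicas, max_replicas] range."""
    if per_replica_target <= 0:
        raise ValueError("per_replica_target must be positive")
    if pending_work < 0:
        raise ValueError("pending_work cannot be negative")
    if not 0 <= min_replicas <= max_replicas:
        raise ValueError("require 0 <= min_replicas <= max_replicas")
    wanted = math.ceil(pending_work / per_replica_target)
    # With min_replicas == 0 and no pending work, the result is 0 (scale to zero).
    return max(min_replicas, min(wanted, max_replicas))


if __name__ == "__main__":
    print(desired_replicas(0, 10, 0, 5))     # 0: idle app scales to zero
    print(desired_replicas(0, 10, 1, 5))     # 1: a minimum of one keeps a warm replica
    print(desired_replicas(25, 10, 0, 5))    # 3: ceil(25 / 10)
    print(desired_replicas(1000, 10, 0, 5))  # 5: capped at max_replicas
```

Setting the minimum to one trades the savings of scaling to zero for having a replica always ready.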

To learn more about the Dedicated plan and workload profiles, see:

- Azure Container Apps hosting of Azure Functions | Microsoft Learn

- Enable Workload profiles in Azure Functions for Azure Container Apps

- Azure Container Apps plan types

- Workload profiles in Azure Container Apps

Timer Trigger for Azure Functions on Azure Container Apps

An Azure Functions timer trigger lets you run your code on a schedule, which is ideal for tasks such as cleaning up databases, sending emails, processing data, generating reports, performing health checks, or monitoring the status of your apps or databases. You can now use timer trigger functions in Azure Functions on Azure Container Apps with platform-managed scaling, which runs a scheduled function and then scales back to zero after the execution completes.
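Azure Functions timer triggers use six-field NCRONTAB expressions ({second} {minute} {hour} {day} {month} {day-of-week}). For example, `0 */5 * * * *` runs every five minutes. The Python sketch below shows how such a schedule maps to concrete times and how a scale-from-zero decision could follow from it. It is not the Functions runtime's parser: it supports only `*`, `*/n`, single values, `a-b` ranges, and comma lists, it requires all six fields to match, and it ignores time zones. The `timer_replicas` helper and its `lookahead_s` window are hypothetical models of the zero-to-one-and-back behavior, not the platform's actual scaler.

```python
from datetime import datetime, timedelta
from typing import Set


def _parse_field(field: str, low: int, high: int) -> Set[int]:
    """Expand one NCRONTAB field into the set of values it allows.
    Handles '*', '*/step', single numbers, 'a-b' ranges and comma lists only."""
    values: Set[int] = set()
    for part in field.split(","):
        if part == "*":
            values.update(range(low, high + 1))
        elif part.startswith("*/"):
            values.update(range(low, high + 1, int(part[2:])))
        elif "-" in part:
            start, end = (int(x) for x in part.split("-"))
            values.update(range(start, end + 1))
        else:
            values.add(int(part))
    return values


def matches(schedule: str, when: datetime) -> bool:
    """True if `when` (to the second) satisfies every one of the six fields."""
    sec, minute, hour, day, month, dow = schedule.split()
    sunday_based_dow = (when.weekday() + 1) % 7  # Python: Monday=0; NCRONTAB: Sunday=0
    return (
        when.second in _parse_field(sec, 0, 59)
        and when.minute in _parse_field(minute, 0, 59)
        and when.hour in _parse_field(hour, 0, 23)
        and when.day in _parse_field(day, 1, 31)
        and when.month in _parse_field(month, 1, 12)
        and sunday_based_dow in _parse_field(dow, 0, 6)
    )


def timer_replicas(schedule: str, now: datetime, lookahead_s: int = 60) -> int:
    """Hypothetical scaling decision: 1 replica if the schedule fires within
    the next `lookahead_s` seconds, otherwise 0 (scaled to zero between runs)."""
    start = now.replace(microsecond=0)
    for offset in range(lookahead_s + 1):
        if matches(schedule, start + timedelta(seconds=offset)):
            return 1
    return 0


if __name__ == "__main__":
    every_five_min = "0 */5 * * * *"
    print(matches(every_five_min, datetime(2024, 1, 1, 12, 10, 0)))        # True
    print(timer_replicas(every_five_min, datetime(2024, 1, 1, 12, 9, 30)))  # 1: fires in 30 s
    print(timer_replicas(every_five_min, datetime(2024, 1, 1, 12, 6, 0)))   # 0: next run is 4 min away
```

Between scheduled runs, the model reports zero replicas. That matches the behavior described above: the platform starts an instance for the run and then scales back to zero.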

Here is a demo video that deploys a timer trigger function app to an Azure Container Apps environment using the Consumption workload profile:

Getting Started with Azure Functions on Azure Container Apps.

Deploy your apps to Azure Functions for cloud-native microservices today! To learn more:

- Visit the Getting Started guide on Microsoft Docs.

- Learn more about pricing details from th

- Reach us on our GitHub page: azure-functions-on-container-apps repo.

- Timer Trigger Azure Functions on Azure Container Apps Sample

- Help us improve by taking this short survey.
